I’ve already done quite an extensive write-up on the best graphics cards for LLM inference and training this year, but this time I’ve decided to compile an even more versatile resource for all of you who dabble with local and private AI software, including image generation WebUIs, AI vocal cover tools, LLM roleplay chat interfaces and much more. Let’s get straight into it!

This website is reader-supported and is a part of the AliExpress Partner Program, Amazon Services LLC Associates Program and the eBay Partner Network. When you buy using links on our site, we may earn an affiliate commission!

Updated for March 2026: The guide was re-checked against current GeForce, Radeon, Ollama, ROCm, and ComfyUI support. The AMD section was updated to reflect improved local-AI compatibility, and the recommendations were tightened around value, VRAM, and real-world local AI workflows.

Who Is This List For?

Well, the first thing that I need to clarify is that this guide will work best for those of you who:

- Want to further train Stable Diffusion checkpoints or create fine-tunes such as LoRA and LyCORIS.

- Need a GPU for training LLMs in a home environment, on a single home PC (again, including LoRA fine-tunes for text generation models).

- Are interested in efficiently training RVC voice models for making AI vocal covers, or fine-tuning models such as XTTS for higher quality voice cloning.

- …and are looking for a new graphics card that will also perform the best for model inference in many different contexts, including the ones mentioned above.

If you’re more geared toward training custom AI models from the standpoint of a machine learning enthusiast or data engineer, you will also find these GPUs quite useful, although there are other, possibly more interesting solutions for you, such as running multiple graphics cards in a multi-GPU workstation configuration. That, however, is a topic for a whole new article. So now, let’s get to one last thing that needs to be said before we get to the list itself.


Which Parameters Really Matter When Picking a GPU For Training AI Models?

Four things: the amount of VRAM, max clock speed, cooling efficiency and overall benchmark performance. That’s it. Let’s quickly go through each of them and why it matters in this particular context.

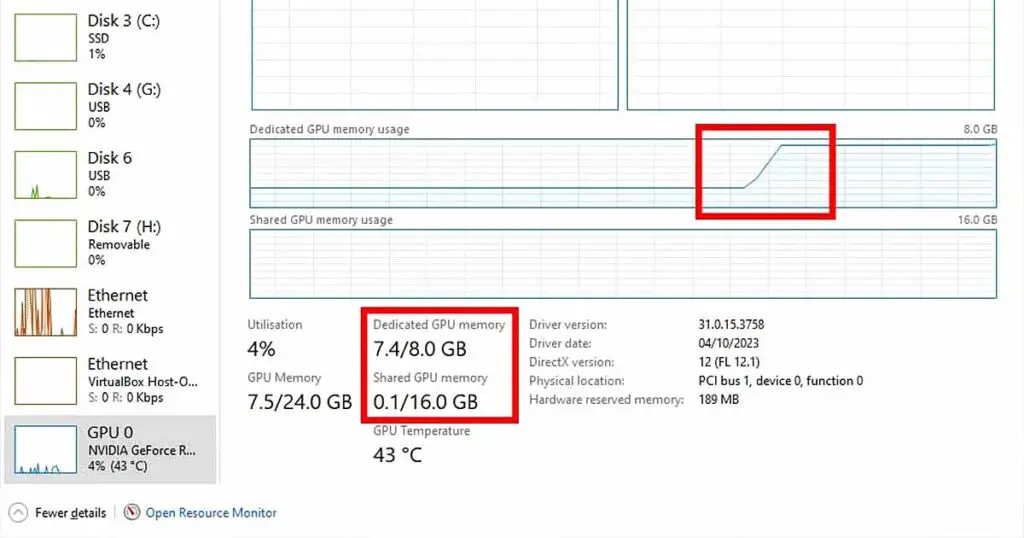

- VRAM – For training AI models, fine-tuning, and efficiently processing large batches of data at once, you need as much VRAM as you can get. In general, you don’t want less than 16GB of video memory, and the 24GB models would be ideal if you can afford them. What too little VRAM costs you depends on the workload. When training LoRA models for Stable Diffusion, it usually just forces smaller batches and makes the process much slower. With open-source large language models, it can completely lock you out of many larger, higher-quality text generation models.

- Max clock speed – This is pretty self-explanatory: the faster the GPU can make calculations, the faster you can get through the model training process. This takes us straight to the next point, though.

- Cooling efficiency – Fast calculations generate a large amount of heat that needs to be dissipated. Once the GPU hits its thermal limit and can’t cool itself quickly enough, thermal throttling starts: the core clock slows down so as not to cook your precious piece of smart metal. My take is: always try to aim for 3-fan GPUs whose cooling performance is backed by benchmarks. In my experience, with the graphics cards mentioned in this list (which are pretty much top shelf), cooling is hardly ever an issue.

- Overall benchmark performance – Once again, whenever in doubt, check the benchmark tests, which luckily are available all over the internet these days. These are mostly useful when comparing two high-end cards with each other.

These very same things matter just as much for AI model inference, that is, for using generative AI software to quickly get your desired outputs.
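If you already own an NVIDIA card and want to see where it stands on VRAM, clocks, and temperature, here is a minimal Python sketch that reads those values through `nvidia-smi`. It assumes the NVIDIA driver (which ships `nvidia-smi`) is installed. The helper names `parse_gpu_csv` and `query_gpus` are my own, and the sample line contains made-up numbers purely to show the output format.

```python
import csv
import io
import subprocess

# Query fields understood by `nvidia-smi --query-gpu`; values come back in this order.
FIELDS = ["name", "memory.total", "memory.used",
          "temperature.gpu", "clocks.sm", "clocks.max.sm"]


def parse_gpu_csv(text):
    """Parse `--format=csv,noheader,nounits` output into one dict per GPU."""
    gpus = []
    for row in csv.reader(io.StringIO(text), skipinitialspace=True):
        if not row:
            continue  # skip blank lines
        name, mem_total, mem_used, temp, clock, clock_max = [c.strip() for c in row]
        gpus.append({
            "name": name,
            "vram_total_mib": int(mem_total),
            "vram_used_mib": int(mem_used),
            "temp_c": int(temp),
            "sm_clock_mhz": int(clock),
            "sm_clock_max_mhz": int(clock_max),
        })
    return gpus


def query_gpus():
    """Run nvidia-smi and parse its output (requires an NVIDIA driver)."""
    cmd = ["nvidia-smi",
           "--query-gpu=" + ",".join(FIELDS),
           "--format=csv,noheader,nounits"]
    out = subprocess.run(cmd, capture_output=True, text=True, check=True).stdout
    return parse_gpu_csv(out)


# Illustrative sample line (made-up numbers) so the parser can be tried without a GPU.
sample = "NVIDIA GeForce RTX 3090, 24576, 10240, 71, 1695, 2100\n"
for gpu in parse_gpu_csv(sample):
    ratio = gpu["sm_clock_mhz"] / gpu["sm_clock_max_mhz"]
    print(f'{gpu["name"]}: {gpu["vram_used_mib"]}/{gpu["vram_total_mib"]} MiB VRAM, '
          f'{gpu["temp_c"]} C, at {ratio:.0%} of max SM clock')
```

On a real system you would call `query_gpus()` while a training or generation job is running. A clock ratio that keeps dropping while the temperature sits near the card's limit hints at thermal throttling, but an idle GPU also downclocks on its own, so the numbers only mean something under load. Some fields can come back as `[N/A]` on certain cards, which this sketch does not handle.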

With that out of the way, let’s get to the actual list. Feel free to use the orange buttons to check the prices of the mentioned GPUs. If you’re interested in used models, eBay is one of the best places to get an idea of the current price of a second-hand GPU unit, so I’ve included these links as well.

If you’re wondering why this list is still mostly NVIDIA-focused, the short version is software friction. NVIDIA remains the easiest plug-and-play option for local AI, especially across CUDA-first tools. AMD is much more viable than it used to be, though, so I cover that properly in the addendum at the end of this guide.

1. NVIDIA GeForce RTX 5090 32GB

Since its release, the NVIDIA GeForce RTX 5090 32GB has sat at the top of the food chain when it comes to consumer GPUs, and unsurprisingly, its price tag reflects that. With 32GB of GDDR7 VRAM, it’s a real powerhouse for AI-related workloads, especially if you’re running large language models locally on your system.

Thanks to its massive raw performance uplift paired with DLSS 4 capabilities, it dominates in the gaming field as well. The rest of the RTX 5xxx series lineup (the RTX 5080, RTX 5070 Ti, and RTX 5070) offers 16GB, 16GB, and 12GB of VRAM respectively, which still makes these cards decent options, but the absence of a 24GB model in this generation is a real disappointment for me and many local AI software enthusiasts.

2. NVIDIA GeForce RTX 5080 16GB & RTX 5070 Ti 16GB

The NVIDIA GeForce RTX 5080 and RTX 5070 Ti are close in terms of performance. While the 5070 Ti isn’t quite as powerful as the 5080, the gap is relatively small. The RTX 5080 pulls ahead mainly thanks to its higher CUDA core count and greater memory bandwidth, which makes it the better choice of the two for those who need faster training speeds and better efficiency in AI frameworks.

As for the base RTX 5070 12GB, the RTX 4090, which we’ll cover next, easily surpasses it in raw computing power and is a much faster and more capable GPU in most contexts. For local AI applications, the base 5070 is better compared to the RTX 4080 and the 4070 Ti.

3. NVIDIA GeForce RTX 4090 24GB

- With no 24GB cards in the RTX 5xxx series, the 4090 is still a great choice for locally hosted LLMs.

- Great performance when it comes to model training.

- Raw benchmark scores better than those of the base 5070.

- It’s still quite expensive.

- The performance jump from the base 4080 is significant, although it’s debatable whether the upgrade is worth it.

The NVIDIA GeForce RTX 4090 comes with 24GB of VRAM on board, which is currently the second highest value you can expect from consumer GPUs. If you have the money to spare, this is still the go-to card if you want raw performance surpassing the new RTX 5070.

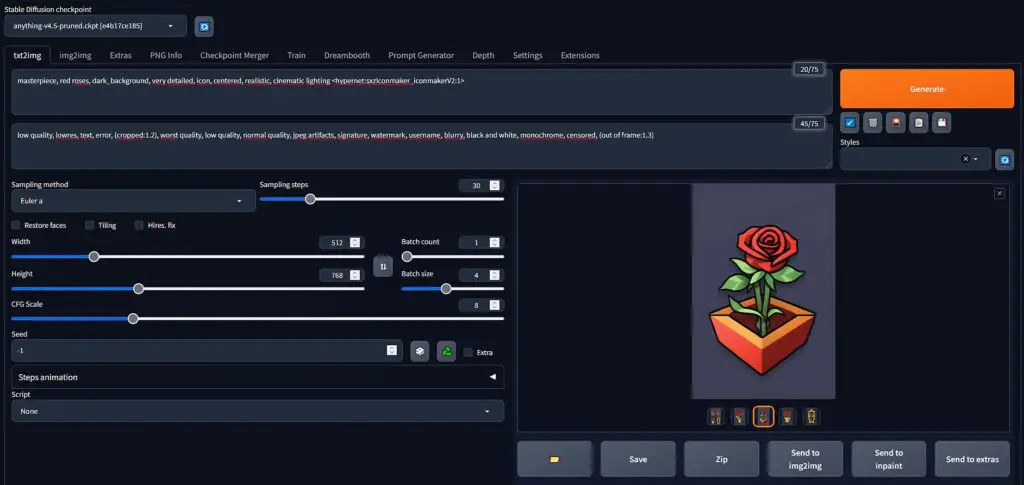

The 4090 will give you near-instant generations with most basic locally hosted LLMs given the right configuration, quick image generation with local Stable Diffusion-based software, and great performance when it comes to model training and fine-tuning.

Performance-wise, it’s the second best card on this list. Cost-wise, well, some things never change. Regardless, it wouldn’t be right to leave out the RTX 4090, which is currently the second most sought-after NVIDIA GPU on the market. With that said, let’s now move to some more affordable options, as there are quite a few to choose from here!

4. NVIDIA GeForce RTX 4080 SUPER / RTX 4080 16GB

- Strong overall performance for local inference and image generation.

- 16GB of VRAM is still workable for many serious home AI workflows.

- The more relevant 4080-family variant to feature this year.

- Still capped at 16GB of VRAM.

- Often a weaker value proposition for local AI than used 24GB cards.

The NVIDIA GeForce RTX 4080 SUPER is the 4080-family card worth taking a closer look at. Both the RTX 4080 SUPER and the original RTX 4080 come with 16GB of GDDR6X VRAM, but the SUPER is the refreshed version of the two and therefore the better default reference point for all of you still looking at this tier.

For local AI, the main question here is not whether the RTX 4080 SUPER is fast enough, because it absolutely is. The bigger question is whether 16GB is enough for the kind of workloads you want to run. For plenty of local inference, image generation, and mixed creative workloads the answer is yes, but once model size, context length, or heavier multimodal use starts to matter more, this is where 24GB-class cards begin to look more appealing.
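To get a feel for why context length eats into a 16GB budget, here is a rough Python sketch of the standard KV cache size estimate for a transformer: two tensors (keys and values) per layer, each holding one vector per KV head per token. The architecture numbers below (32 layers, 32 KV heads, head dimension 128, 16-bit cache values) are assumptions picked to resemble a typical 7B-class model without grouped-query attention. They are not measurements of any specific model, and the function name is my own.

```python
GIB = 1024 ** 3


def kv_cache_bytes(context_len, n_layers, n_kv_heads, head_dim, bytes_per_value=2):
    """Approximate KV cache size for one sequence.

    The leading 2 accounts for storing both keys and values.
    """
    return 2 * n_layers * n_kv_heads * head_dim * context_len * bytes_per_value


# Assumed example architecture (hypothetical 7B-class model, 16-bit cache).
cfg = dict(n_layers=32, n_kv_heads=32, head_dim=128)

for ctx in (4096, 16384, 32768):
    size_gib = kv_cache_bytes(ctx, **cfg) / GIB
    print(f"{ctx:>6} tokens -> {size_gib:.1f} GiB of KV cache")
```

Under these assumptions the cache alone grows from 2 GiB at 4,096 tokens to 16 GiB at 32,768 tokens, on top of the model weights. Models that use grouped-query attention have fewer KV heads and need proportionally less, and some runtimes can quantize the cache, so treat this as an upper-end estimate rather than a prediction for your setup.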

I would still describe the RTX 4080 SUPER as a strong high-end 16GB choice rather than the best pure-value pick for local AI. If you find one for the right price, it is still a very capable option, but if pricing gets too close to used 24GB cards, I would usually start leaning toward those instead.

5. NVIDIA GeForce RTX 4070 Ti SUPER 16GB / RTX 4070 Ti 12GB

- 16GB of VRAM makes it much more flexible than the original 4070 Ti.

- A better-balanced choice for local AI than older 12GB cards in this class.

- Still offers strong overall performance in a more approachable tier.

- Still not a 24GB-class card.

- Used-market older cards can sometimes offer more VRAM value per dollar.

Now comes the time for the NVIDIA GeForce RTX 4070 Ti SUPER, which is the variant from this tier that makes the most sense to highlight here. The original RTX 4070 Ti was already a fast card, but its 12GB VRAM ceiling made it much easier to run into limits once you started stepping beyond lighter workloads.

That is why the RTX 4070 Ti SUPER is the better fit for this list. With 16GB of GDDR6X VRAM, it is simply more comfortable for local inference, bigger models, longer contexts, image generation, and more demanding mixed AI workflows than its base version. The original RTX 4070 Ti is not suddenly a bad card, but the SUPER version is the one that better matches what local AI users actually care about.

So if you are shopping in this part of the market, I would frame it like this: the RTX 4070 Ti SUPER is the version to actively aim for, while the older 4070 Ti only really becomes interesting if you find it used at a meaningfully lower price. Now let’s move on to the 3xxx-gen cards.

6. NVIDIA GeForce RTX 3090 Ti 24GB

- Best price to performance ratio.

- 24GB of VRAM keeps it relevant for larger local models and heavier workflows.

- Still a relatively recent and very much relevant card.

- Many great second-hand deals.

- Not as powerful as the 4xxx series.

- The 24GB of VRAM makes it effectively more expensive than the 4070 Ti (but it’s well worth the cost).

Here it is! The NVIDIA GeForce RTX 3090 Ti is definitely my winner when it comes to the most cost-effective and efficient GPUs for local AI model training and inference this year. Here is why.

When it comes to performance, the 3090 Ti is very close to the 4070 Ti, all while having twice as much VRAM on board (24GB vs 12GB) and being much more cost-effective than the NVIDIA 4xxx series, especially if purchased on the second-hand market (once again, keep an eye on the eBay listings).

You can learn more about the 3090 here: NVIDIA GeForce 3090/Ti For AI Software – Is It Still Worth It?

The best thing is that many people upgrading to newer GPUs this year have started to sell their older graphics cards, among which the 3090 Ti was one of the most popular models from the previous gen. There are many great deals out there, especially on local marketplaces. The 3090 series is also mentioned in almost every online thread when the topic of best GPUs for local AI comes up, and for good reason.

The 24GB of VRAM at this price point is still pretty impressive, and that is the real reason the 3090 Ti remains relevant for local AI today. It still hits a very practical balance between memory capacity, performance, and used-market pricing. If you want a 24GB GeForce-class card without paying flagship-level money, the 3090 Ti and the original 3090 are still among the most interesting options.

7. NVIDIA GeForce RTX 3080 Ti 12GB

The NVIDIA GeForce RTX 3080 Ti and the 3090 Ti lie very close together on the performance chart, but the 3080 Ti features only 12GB of VRAM while drawing about as much power as the RTX 3090.

Because of that, this description is pretty short. If you find a used 3080 Ti in good condition and for a really good price, go for it. If, however, getting a 3090/3090 Ti is not a problem for you, it would be the better decision in the end. You get twice the amount of VRAM and better performance after all. With that said, let’s get to the best budget choice on the list – the much older yet still quite reliable RTX 3060.

8. NVIDIA GeForce RTX 3060 12GB – If You’re Short On Money

- Cheap & reliable.

- 12GB of VRAM for this price is a very nice deal.

- New ones can be found for less than $300 in a 2-fan configuration.

- Although this card is certainly not “slow” by modern standards, it’s still the least powerful one on this list.

- If you also plan to play AAA games using the 3060, it won’t last you as long as the remaining listed GPUs.

The NVIDIA GeForce RTX 3060, with 12GB of VRAM on board and a pretty low current market price, is in my book the absolute best tight-budget choice for local AI enthusiasts, both for LLMs and for image generation.

I can already hear you asking: why is that? Well, the prices of the RTX 3060 have already fallen quite substantially, and its performance, as you might have guessed, has not. In most benchmarks this card places right after the RTX 3060 Ti and the 3070, and you will be able to run most 7B models and some 13B models with moderate quantization on it at decent text generation speeds. With the right model and the right configuration, you can get almost instant generations in low to medium context window scenarios!
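The 7B and 13B claim is easy to sanity-check with back-of-the-envelope arithmetic: weight memory is roughly the parameter count times bits per weight divided by 8. Below is a small Python sketch of that estimate. The 1.5 GiB headroom for activations, KV cache, and the desktop is my own assumption, and the helper names are hypothetical.

```python
GIB = 1024 ** 3


def weights_gib(n_params, bits_per_weight):
    """Approximate size of the model weights alone, in GiB."""
    return n_params * bits_per_weight / 8 / GIB


def fits(n_params, bits_per_weight, vram_gib, headroom_gib=1.5):
    """True if the weights fit while leaving `headroom_gib` free.

    The default headroom is an assumed allowance, not a measured value.
    """
    return weights_gib(n_params, bits_per_weight) <= vram_gib - headroom_gib


for label, n_params in (("7B", 7e9), ("13B", 13e9)):
    for bits in (16, 8, 4):
        size = weights_gib(n_params, bits)
        verdict = "fits" if fits(n_params, bits, vram_gib=12) else "too big"
        print(f"{label} @ {bits:>2}-bit: {size:5.1f} GiB -> {verdict} on a 12GB card")
```

With these assumptions, a 7B model fits at 8-bit and 4-bit, while a 13B model only fits at 4-bit. Real quantization formats store some extra metadata per block of weights, so actual files are somewhat larger than the nominal bit width suggests, and long contexts need additional memory on top of this.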

Once again, if we’re talking about the absolute lowest price GPU you could use for training and fine-tuning smaller models on your PC, getting the RTX 3060 is most probably the answer for you. Also, as always check out the latest second-hand deals for this card over on eBay – you can find an even better offer this way.

Hey, What About AMD Cards? – The Promised Addendum

AMD cards are no longer as easy to dismiss for local AI as they were a year or two ago. NVIDIA still has the broadest plug-and-play software compatibility, so it remains my default recommendation. But in 2026, Radeon cards such as the RX 7900 XTX and RX 9070 XT are viable for local AI if you are willing to work within ROCm and Vulkan-based workflows, since these cards have no CUDA support.

Ollama officially supports many modern Radeon GPUs, AMD’s ROCm compatibility matrix includes current consumer cards, and ComfyUI Desktop now has official AMD ROCm support on Windows. So my real position now is not “avoid AMD”, but “buy NVIDIA for the smoothest experience, buy AMD if price-to-VRAM matters and you do not mind a bit more setup”.
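If you use PyTorch-based tools and are not sure which GPU backend your install actually targets, a quick check like the following can help. This is a minimal sketch that assumes PyTorch may or may not be installed. The function name `detect_backend` and the returned labels are my own. It relies on the fact that ROCm builds of PyTorch expose the GPU through the `torch.cuda` namespace and set `torch.version.hip`, while CUDA builds leave that attribute as `None`.

```python
def detect_backend():
    """Report which GPU backend the local PyTorch install can use.

    Returns one of: "pytorch-not-installed", "cpu-only", "rocm", "cuda".
    """
    try:
        import torch
    except ImportError:
        return "pytorch-not-installed"

    # ROCm builds reuse the torch.cuda API, so this check covers AMD and NVIDIA.
    if not torch.cuda.is_available():
        return "cpu-only"

    # torch.version.hip is a version string on ROCm builds and None on CUDA builds.
    if getattr(torch.version, "hip", None):
        return "rocm"
    return "cuda"


print("Detected backend:", detect_backend())
```

A result of `cpu-only` on a machine with a Radeon card usually means a CPU or CUDA build of PyTorch was installed instead of the ROCm one. Note that this only covers PyTorch. Tools with their own runtimes, such as Ollama, detect GPUs separately.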
