When you think about graphics cards capable of efficiently running different kinds of local AI software, from LLM hosting backends through voice cloning apps to Stable Diffusion WebUIs, you probably think of hardware you can’t really afford. What if I told you that there are cheaper options out there which, while they may sometimes require a bit more tinkering to set up, can still be purchased without breaking the bank? Here are all of my data-backed recommendations, divided into two main categories – under $600 and under $1000.

Updated for March 2026: This guide was re-checked against current GeForce, Radeon, Intel Arc, Ollama, ROCm, and Vulkan support. The main list was refreshed with newer budget cards like the RTX 5060 Ti 16GB, RTX 5070, Radeon RX 9070, and Radeon RX 9060 XT.

This website is reader-supported and is a part of the AliExpress Partner Program, Amazon Services LLC Associates Program and the eBay Partner Network. When you buy using links on our site, we may earn an affiliate commission.

The Most Important Specs For AI (And The VRAM)

As I’ve already covered the most important graphics card specs for AI in my main article on the very best GPUs for local LLMs this year, I won’t repeat much of that here. However, here is a very quick rundown of the GPU parameters that matter most for local AI workflows.

You might be surprised, but the things that matter most here are, in this exact order: your GPU’s support for the standards your chosen AI software relies on (like CUDA and ROCm), the amount of video memory (VRAM) on the card and its memory bandwidth, and only then the maximum clock speed.

So yes, a large amount of VRAM is pretty much the most important thing to look for when it comes to training and fine-tuning AI models locally, as well as for local LLM inference, as long as you’re sure you can actually get your chosen GPU to work with your project or software. That can sometimes be challenging on non-NVIDIA GPUs (more on that later).

More VRAM lets you keep more of the workload on the GPU instead of offloading parts of it to slower system RAM, which often makes things run much faster. In the LLM field, it also lets you run larger, higher-quality models.
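To put rough numbers on "larger models", a common back-of-the-envelope estimate is that a model's weights take about parameters × bits per weight ÷ 8 bytes. The sketch below assumes a flat 20% overhead on top of that for the KV cache, activations and runtime buffers; that factor is an assumption, and real usage grows with context length and varies by backend. The model sizes are hypothetical examples, not specific models.

```python
def estimate_vram_gb(params_billion: float, bits_per_weight: float, overhead: float = 1.2) -> float:
    """Rough VRAM estimate (in GB, where 1 GB = 1e9 bytes) for running an LLM.

    Weights take params * bits / 8 bytes; `overhead` is an assumed multiplier
    covering KV cache, activations and runtime buffers.
    """
    weight_bytes = params_billion * 1e9 * bits_per_weight / 8
    return weight_bytes * overhead / 1e9


# VRAM sizes of the cards discussed in this guide.
VRAM_TIERS_GB = (12, 16, 24)

# Hypothetical model sizes (billions of parameters) at 16-bit and ~4-bit precision.
for params, bits in [(8, 16), (8, 4), (14, 4), (32, 4)]:
    need = estimate_vram_gb(params, bits)
    fits = [vram for vram in VRAM_TIERS_GB if need <= vram]
    print(f"{params}B @ {bits}-bit: ~{need:.1f} GB, fits in: {fits or 'none of these'}")
```

Under these assumptions, an 8B model at 16-bit (~19.2 GB) only fits the 24 GB tier, while the same model at ~4-bit (~4.8 GB) fits on any of these cards. Many "4-bit" quantization formats use somewhat more than 4 bits per weight in practice, so passing a value like 4.5 gives a more conservative estimate.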

A large amount of VRAM is also still very useful for local AI image generation, especially for newer diffusion and video models, but once a model fits in memory, its speed is then driven heavily by backend support, compute throughput, and memory bandwidth.

Maximum clock speed matters less than you might expect on recent hardware. Most graphics cards released in the last three years or so are fast enough for the majority of local AI workflows.

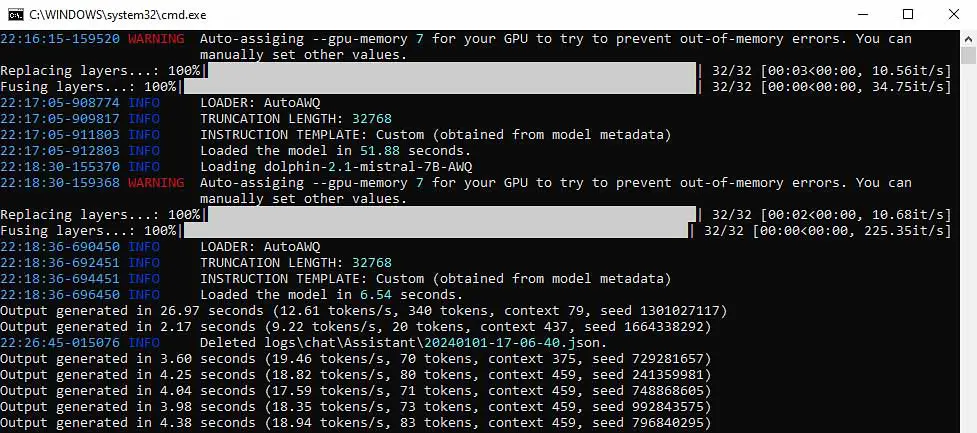

Are you interested in what 10 t/s or 50 t/s LLM text generation speeds look like in practice? Check out our simulator tool here: LLM Tokens-per-Second (t/s) Generation Speed Simulator

Benchmarks And Different Use Cases

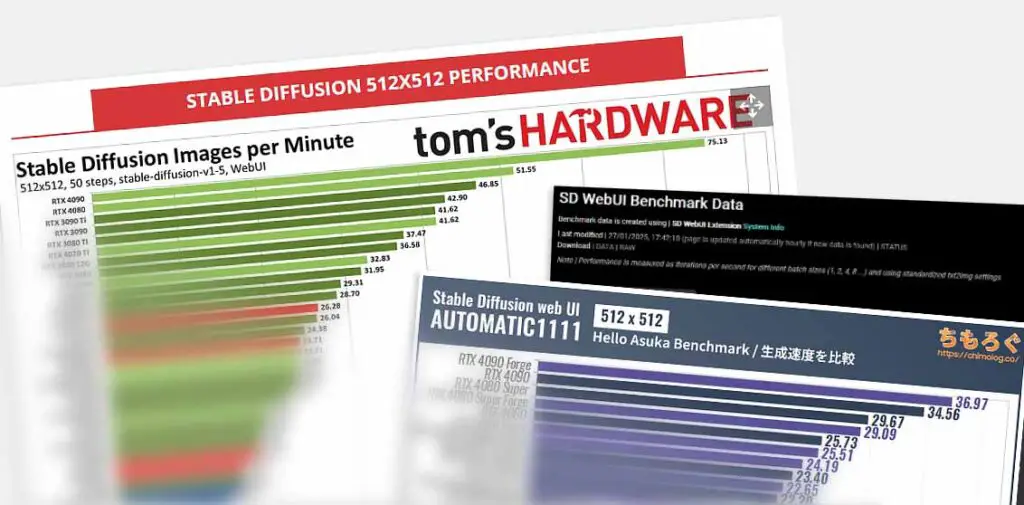

There are quite a few useful AI benchmark resources online, for example ones showing the local Stable Diffusion performance of different GPUs here. These give you a good idea of the general speed differences between modern graphics cards.

There are also more specific ones, like this one comparing the new Intel Arc B580 and the NVIDIA RTX 3090 in local LLM training. When comparing any two GPUs and looking for relevant benchmarks, Google and online forums with user reports are your best friends.

Remember that raw speed differences between cards will matter more or less depending on the software you plan to use, your preferred workflows (diffusion models, LLMs, voice cloning, or model training in any of these categories), and the technologies your chosen GPU supports (CUDA on NVIDIA cards, ROCm on AMD GPUs, and so on).

Of course, things such as efficient cooling and overall reliability of your chosen GPU model are also important. That, however, is the case when getting a graphics card for pretty much any purpose, including gaming, and more complex productivity use cases such as 3D modelling and video editing.

With that said, let’s take a closer look at software support across the different GPU brands. This is arguably the most important thing to consider if you’re thinking of buying a non-NVIDIA GPU to save some money.

NVIDIA vs. AMD vs. Intel (Important Notes)

NVIDIA is the current leader when it comes to GPUs bought for local AI-related tasks, and that is mainly because of its CUDA framework, which all NVIDIA cards support natively and which AMD and Intel GPUs do not. Across a large chunk of popular AI software, CUDA is still the best-supported path.

As most developers of local LLM software, Stable Diffusion WebUIs and similar tools prioritize CUDA when building and optimizing their projects, NVIDIA cards will generally work best with such software out of the box. Still, support for AMD and Intel is much better than it used to be, and in many workflows they are no longer fringe options.

So, NVIDIA graphics cards have native support for CUDA, which makes them pretty much a “plug-and-play” solution for local AI software in many cases. AMD and Intel GPUs do not support CUDA natively, so they rely on different software stacks, runtimes, and backends depending on the exact app and operating system you plan to use.

AMD cards rely on AMD’s own ROCm software stack, and can also be used with ZLUDA, a third-party project that can sometimes run certain CUDA-targeted workloads but is not a universal fix for CUDA-only software.

Intel, on the other hand, supports the usual set of standard APIs (OpenGL, OpenCL, Vulkan, DirectX), and in practice also benefits from newer AI-focused paths such as SYCL, oneAPI, and upstream PyTorch GPU support. That still doesn’t make Arc the default choice for every AI app, but it does make it much more relevant than older guides might suggest.
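For PyTorch-based tools (many Stable Diffusion WebUIs and training scripts are built on it), a quick way to see which of these paths your install actually uses is to ask PyTorch itself. The sketch below assumes a recent PyTorch build: ROCm builds reuse the `torch.cuda` API and set `torch.version.hip`, and Intel GPUs show up as the `xpu` device in newer releases. Apps built on llama.cpp, Ollama or Vulkan backends do their own device detection, so this check does not cover them.

```python
def detect_torch_backend() -> str:
    """Report which GPU path the local PyTorch install can see, if any."""
    try:
        import torch
    except ImportError:
        return "pytorch not installed"

    if torch.cuda.is_available():
        # ROCm builds of PyTorch expose AMD GPUs through torch.cuda;
        # torch.version.hip is a version string on those builds and None otherwise.
        if getattr(torch.version, "hip", None):
            return "rocm"
        return "cuda"

    # Intel GPU support lives under torch.xpu in newer PyTorch releases;
    # getattr keeps this working on older versions that lack the module.
    xpu = getattr(torch, "xpu", None)
    if xpu is not None and xpu.is_available():
        return "xpu"

    return "cpu"


if __name__ == "__main__":
    print(detect_torch_backend())
```

If this prints "cpu" on a machine with an AMD or Intel card, the usual culprit is a PyTorch build without ROCm or XPU support rather than the card itself.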

What It Looks Like In Practice – Non-NVIDIA GPUs

The bottom line here is: most local AI software is primarily developed with CUDA in mind, and support for other technologies like ROCm, DirectML, Vulkan Compute, SYCL, or OpenCL is often secondary and may or may not be implemented equally well by the developer of a particular program.

When purchasing a non-NVIDIA GPU, be mindful of what software you plan to use with it, check its compatibility status carefully, and in some cases be prepared for more tinkering during the software setup, less frequent updates and possible performance issues along the way. AMD and Intel can offer excellent value, but they make the most sense when you already know which apps, backends, and operating systems you want to run.

You might also like: Do You Really Need CUDA For Local LLMs? – Here Are The Alternatives

Your experience can vary greatly depending on the programs and workflows you have in mind, as well as the specific GPU model you decide to get.

In the end, though, if you want to spare yourself some troubleshooting and compatibility issues, or you aren’t yet sure what kinds of AI workloads you’ll be using your GPU for, it may still be better to go for an NVIDIA graphics card despite NVIDIA’s generally higher prices. YMMV.

These are all the things you should know before taking a look at the list below. Done reading? Let’s get to the main part.

Top 11 Affordable GPUs for LLMs and AI Software (Budget Picks Under $1000 & $600)

As promised, here is the full list of cards that, in my opinion, make the most sense to consider when looking for cheaper GPUs for running different types of local AI software on a limited budget. Enjoy!

Options Under $1000

1. NVIDIA RTX 5070 12GB

NVIDIA RTX 5070 12GB is one of the most straightforward current-gen options when looking for a budget GPU for local AI tasks. A new RTX 5070 naturally costs a bit more than an RTX 5060 Ti, and while it has 4 GB less VRAM, it offers more raw speed and noticeably higher memory bandwidth (672 GB/s vs. 448 GB/s), which in some contexts makes it the more attractive choice of the two.

In the end, many people swear by the larger (if slower) 16 GB memory pool of the RTX 5060 Ti 16GB, which lets you load larger LLMs, while many others claim the faster RTX 5070 is the much better choice. As already stated, this will depend on your particular use cases.

2. AMD Radeon RX 9070 16GB

With 16 GB of video memory, which is enough for most basic AI-related tasks, and great overall performance for a card in this price range, the AMD Radeon RX 9070 16GB is one of the most interesting current-gen AMD options for local AI workflows.

Provided that you’re sure you can use an AMD graphics card in your particular AI software setup, this is a really good choice performance-wise for local LLMs. Just remember that your actual experience will still depend on the exact software stack, setup path, and backend you plan to use.

Check out also: 6 Best AMD Cards For Local AI & LLMs This Year

Remember that all of the quirks of both AMD and Intel Arc graphics cards in AI-related workflows that I’ve mentioned above still stand. Be mindful of them when purchasing a non-NVIDIA GPU for any kind of AI-related work.

3. NVIDIA RTX 4070 12GB

NVIDIA RTX 4070 12GB is another option you have when looking for a budget GPU for local AI tasks. It becomes much more interesting when you can find it discounted or second-hand, and while it has 4 GB less VRAM than a card like the RTX 5060 Ti 16GB, it offers somewhat higher memory bandwidth (504 GB/s vs. 448 GB/s) and more CUDA cores (5,888 vs. 4,608), which can make it the faster of the two for models that fit within its 12 GB.

4. AMD RX 7800 XT 16GB

With 16 GB of video memory, which is enough for most basic AI-related tasks, and great overall benchmark scores, the AMD RX 7800 XT 16GB is still one of the better cards from the previous AMD Radeon GPU generation.

Provided that you’re sure you can use an AMD graphics card in your particular AI software setup, this is a really good choice performance-wise, for local LLMs in particular. Just like with the other Radeon cards, though, your actual experience will depend on the exact software stack, setup path, and backend you plan to use.

5. NVIDIA RTX 4060 Ti 16GB

The NVIDIA RTX 4060 Ti 16GB still deserves a mention here. Although it’s not the most efficient or the fastest card in NVIDIA’s RTX 40 series, it’s one of the most popular ones. While the RTX 4060 Ti has much lower memory bandwidth than the NVIDIA RTX 4070 (288 GB/s vs. 504 GB/s, roughly 43% less), it does feature 4GB more VRAM on board. The choice between these two boils down to two things: the price, and how much faster memory matters in your specific use case.
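A common rule of thumb explains why bandwidth matters so much here: when generating text one token at a time, the GPU has to read roughly all of the model’s weights from VRAM for every token, so memory bandwidth divided by model size gives a theoretical ceiling on tokens per second. The sketch below applies that to the two bandwidth figures above, assuming a hypothetical ~4.5 GB quantized model. Real-world speeds land below these ceilings and also depend on the backend, context length, and compute.

```python
def decode_ceiling_tps(bandwidth_gb_s: float, model_size_gb: float) -> float:
    """Theoretical max single-stream tokens/s, assuming each token reads all weights once."""
    return bandwidth_gb_s / model_size_gb


# Hypothetical: roughly an 8B model quantized to ~4-bit.
MODEL_SIZE_GB = 4.5

for card, bandwidth in [("RTX 4060 Ti 16GB", 288), ("RTX 4070 12GB", 504)]:
    print(f"{card}: up to ~{decode_ceiling_tps(bandwidth, MODEL_SIZE_GB):.0f} t/s")
```

With these inputs the ceilings come out to 64 and 112 t/s, the same 1.75x gap as the raw bandwidth figures. The 4060 Ti’s extra 4 GB only pays off once a model no longer fits in the 4070’s 12 GB.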

Granted, now that cards like the RTX 5060 Ti 16GB are around, the 4060 Ti isn’t the obvious default pick anymore. Still, if you can find it for the right price, it’s absolutely not a bad option. And of course, if you can find a used RTX 3090 or 3090 Ti, that would be a much better choice in most cases.

6. AMD RX 6800 XT 16GB

The AMD RX 6800 XT 16GB is a great pick if you’re considering getting an AMD GPU and you want something that’s less expensive but reliable in terms of processing speed and with plenty of VRAM on board. Local LLM hosting apps like KoboldCpp are known to work with this card without much trouble and at reasonable speeds, and it also does pretty well in ROCm deep learning benchmarks.

In terms of where it sits on a budget list today, the closest NVIDIA counterpart to this card would be the RTX 5060 Ti 16GB, rather than the much older cards it used to be compared with. If you want to save some money and an AMD card is a viable option for your use case, it’s another one worth serious consideration.

Options Under $600

7. NVIDIA RTX 5060 Ti 16GB

The NVIDIA RTX 5060 Ti 16GB is one of the newer cards on our list, and in my opinion the clearest new-retail NVIDIA budget recommendation for local AI workflows right now. While it’s not meant for the heaviest workloads imaginable, it does give you 16GB of VRAM on board in NVIDIA’s current mainstream lineup, which is exactly what makes it such an interesting successor to cards like the RTX 4060 Ti 16GB.

Overall, this is one of the better choices if you’re looking for a recent card with CUDA support without crossing the $600 mark and venturing into the area of more expensive NVIDIA GPUs. Now let’s move on to the next option from AMD.

8. AMD Radeon RX 9060 XT 16GB

The AMD Radeon RX 9060 XT 16GB is another great pick if you’re considering getting an AMD GPU and you want something that’s less expensive but still current-gen, reliable in terms of processing speed and with plenty of VRAM on board.

With supported software stacks, it makes a really strong case as one of the better price-to-VRAM options on the current market. In AMD’s current RX 9000 lineup, it sits below the RX 9070 featured above and the RX 9070 XT.

9. NVIDIA RTX 3060 12GB

While the NVIDIA RTX 3060 12GB is by no means a futureproof choice, it’s arguably the most affordable NVIDIA option for quickly getting into local AI, with enough VRAM for running smaller LLMs locally and enough horsepower for reasonably quick local image generation.

What makes this one even better is that you can often find it used for even lower prices (for example here, over on eBay). If you need an NVIDIA GPU, don’t want to break the bank, and are not bothered by not having the most powerful hardware available, you won’t be disappointed with this one.

10. Intel Arc B580 12GB

The Intel Arc B580 offers 12GB of video memory and Intel’s latest GPU architecture. With very competitive pricing and promising benchmark scores, it is among the newest and most commonly explored alternatives to NVIDIA and AMD graphics cards, for gaming and productivity as well as for local AI workflows. Here you can see it compared directly to the NVIDIA RTX 3090 in an LLM training benchmark using PyTorch.

If you want to know way more about Intel Arc GPUs for local AI, you can find much more info here: Intel Arc B580 & A770 For Local AI Software – A Closer Look

This still relatively new GPU from Intel’s “Battlemage” lineup is widely discussed online, both for its LLM inference capabilities and for its performance in local image generation with Stable Diffusion and FLUX.

Both the solid performance and the very reasonable prices of the new Intel graphics cards make this one really worth considering. Once again, remember to check your software compatibility for the Intel Arc GPUs before deciding to get one of these.

Now let’s take a look at an older, but equally reliable and adequate GPU from the “Alchemist” series with a little bit more video memory on board.

11. Intel Arc A770 16GB

The Intel Arc A770 16GB, the older and slightly less powerful card in Intel’s lineup but with a larger video memory pool, is also an excellent budget GPU for local AI use cases. While it trails the B580 by roughly 10-20% in overall performance, it does give you an extra 4 GB of VRAM if you need it.

Given its price, which is just as good as you might expect, it’s a very strong contender among the best cheap GPUs for local machine learning tasks. As always, be mindful of the limits of Intel’s software ecosystem, which in some cases may not support the software you’re set on using.

Bonus: A Used NVIDIA RTX 3090/Ti 24GB

I wouldn’t be myself if I didn’t include the NVIDIA RTX 3090 / Ti on this list, even as a bonus card at the very end. While the cost of this one doesn’t quite fit in our “budget” price brackets (you can see the current prices of the used units here over on eBay), this card is still among the very best GPU choices for local AI workflows this year.

24 GB of VRAM, fast memory and great performance in both gaming and productivity tasks are just a few of the things that make this card worthwhile. If you can find one second-hand for a good price and in good condition, I wouldn’t think twice. The main reason it still matters is simple: 24GB of VRAM changes what you can comfortably run, both for local LLM inference and for heavier image or video generation workflows.

You can read much more about this one here! – NVIDIA GeForce 3090/Ti For AI Software – Still Worth It?