Ever wondered what 60 tokens per second (t/s) really looks like when a local LLM is generating text on a decent recent GPU? We talk about inference speed all the time, but when you’re reading about speeds such as 10 or 20 t/s, it’s often just an abstract number. If you want to get a real feel for how fast (or slow) different token speeds are, this visualization tool is for you.

After using the simulator, feel free to participate in our quick anonymous poll about what token speed you deem acceptable for your particular use case. Consider your most common tasks (chat, roleplay, writing, coding). At what speed does the experience become reasonably comfortable to work with, without feeling like you’re constantly waiting for the model? Thank you for helping us collect some interesting data!


LLM Tokens per Second Simulator Tool

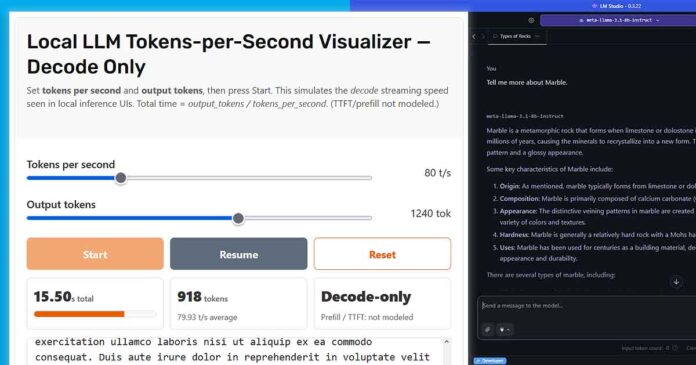

Local LLM Tokens-per-Second Visualizer — Decode Only

Set tokens per second and output tokens, then press Start. This simulates the decode streaming speed seen in local inference UIs. Total time = output_tokens / tokens_per_second. (TTFT/prefill not modeled.)

This self-contained tool runs entirely in your browser.
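If you'd rather see the same idea in a terminal, here is a minimal Python sketch of what the simulator does. It is not the tool's actual source. The function names `decode_time` and `stream_tokens` are made up for illustration, and it assumes a perfectly steady decode rate, which real inference rarely delivers.

```python
import time
from typing import Callable, Iterable, Iterator


def decode_time(output_tokens: int, tokens_per_second: float) -> float:
    """Total decode time in seconds: output_tokens / tokens_per_second."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second


def stream_tokens(
    tokens: Iterable[str],
    tokens_per_second: float,
    sleep: Callable[[float], None] = time.sleep,
) -> Iterator[str]:
    """Yield tokens one by one, pausing 1 / tokens_per_second before each.

    Assumption: a constant rate. TTFT/prefill is not modeled, same as the tool.
    The `sleep` parameter is injectable so the pacing can be checked without waiting.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    delay = 1.0 / tokens_per_second
    for token in tokens:
        sleep(delay)
        yield token
```

To try it, loop over `stream_tokens(["token "] * 60, tokens_per_second=20)` and print each item with `end=""` and `flush=True`. At 20 t/s those 60 tokens should take about `decode_time(60, 20)` seconds, which works out to 3 seconds.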

The LLM Tokens per Second Community Poll

How Does This Work? – t/s Text Generation Speeds Explained

Before we dive in, let’s quickly clarify what we’re talking about. When a Large Language Model generates a response, it doesn’t happen all at once. The process has two main phases:

- Prefill (or prompt processing): This is the initial “thinking” phase where the model processes your entire prompt. It accounts for most of the delay before the first token appears, known as the Time to First Token (TTFT), and it can be slow, especially with long prompts.

- Decode (or token generation): This is the phase where the model generates the response, one token at a time. The speed of this phase is measured in tokens per second (t/s). This is the streaming text you see appearing word-by-word.

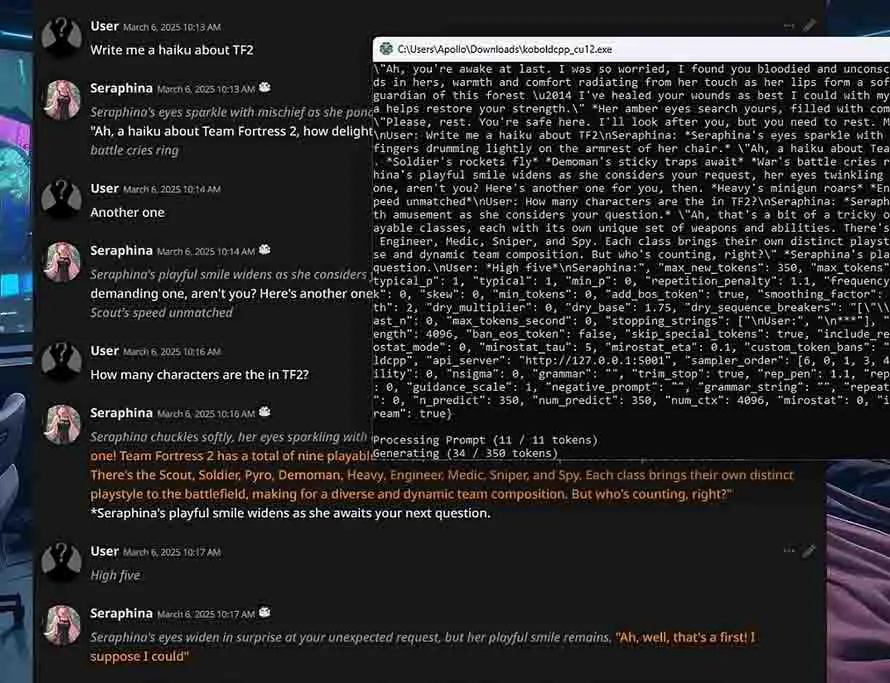

Our simulator focuses exclusively on the decode phase, as this is the part we perceive as the model’s “typing speed.” In a real-world application like SillyTavern or LM Studio, the initial delay before the first word appears (TTFT) can be significant, especially with a large context window. TTFT generally grows with both prompt length and model size.

Our tool skips this “thinking” part to focus solely on the streaming output speed, but it’s an important factor to keep in mind when evaluating overall performance. A higher t/s rate means a smoother, faster, and more responsive chat experience.
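To see how the two phases add up, here is a rough back-of-the-envelope sketch. It assumes TTFT can be approximated as prompt length divided by a prompt-processing speed, and it ignores model loading, queuing, and any slowdown as the context fills up. The function name and the numbers in the usage note are hypothetical, not measurements.

```python
from typing import Tuple


def estimate_response_time(
    prompt_tokens: int,
    output_tokens: int,
    prefill_tps: float,
    decode_tps: float,
) -> Tuple[float, float, float]:
    """Return (ttft_seconds, decode_seconds, total_seconds).

    Assumption: TTFT ~= prompt_tokens / prefill_tps. Real TTFT also includes
    overheads that this simple estimate leaves out.
    """
    if prefill_tps <= 0 or decode_tps <= 0:
        raise ValueError("speeds must be positive")
    ttft = prompt_tokens / prefill_tps      # prefill: process the whole prompt
    decode = output_tokens / decode_tps     # decode: stream the reply
    return ttft, decode, ttft + decode
```

For example, `estimate_response_time(4000, 300, prefill_tps=1000, decode_tps=30)` gives 4 seconds before the first token, 10 seconds of streaming, and 14 seconds in total. With a long prompt, the part our simulator skips can be a meaningful share of the wait.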

Example Attainable t/s Speeds on Different Hardware Setups

When evaluating local large language model performance, token generation speed is one of the most important factors influencing how responsive and natural the interaction with the model feels. The actual tokens-per-second rate you can expect depends heavily on your hardware and how well your model and software setup are configured.

- 5–15 tokens per second: Common for local CPU-only inference or older, less powerful hardware, where real-time interaction with the model can feel noticeably slow.

- 20–50 tokens per second: Achievable with mid-range GPUs (such as an NVIDIA RTX 3060) running 7-billion-parameter models, depending on quantization, the inference framework used, and its settings. According to the official OpenAI API documentation, 50 t/s is the speed at which the GPT-5 API is supposed to serve tokens 99% of the time.

- 50–100+ tokens per second: Possible with high-end GPUs like the NVIDIA RTX 4080 or RTX 4090 when running smaller ~7B-12B models, often leveraging advanced quantization and efficient inference libraries. Speeds higher than 200 t/s are typical for smaller models running on corporate cloud infrastructure, such as Gemini 2.5 Flash-Lite and others you can browse on the Artificial Analysis LLM leaderboard.

Speeds can vary widely based on model size, architecture, software optimizations, batch size, and the context window length.

Keep in mind that these speeds reflect the token generation phase only, not including the initial processing time required to prepare the prompt. Optimizing your entire inference pipeline and selecting hardware & software combos that fit your typical workload are key to achieving a smooth and productive user experience.
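One way to make these bands less abstract is to convert them to words per minute. The sketch below assumes roughly 0.75 English words per token. That figure is a common rule of thumb, not something measured for any particular model, and the real ratio depends on the tokenizer and the language.

```python
WORDS_PER_TOKEN = 0.75  # assumption: rough rule of thumb for English text


def tokens_per_second_to_wpm(
    tokens_per_second: float,
    words_per_token: float = WORDS_PER_TOKEN,
) -> float:
    """Convert a decode speed in tokens/second to approximate words/minute."""
    return tokens_per_second * words_per_token * 60


# Representative speeds taken from the bands listed above
for tps in (5, 15, 20, 50, 100, 200):
    print(f"{tps:>4} t/s ~ {tokens_per_second_to_wpm(tps):>6.0f} words/min")
```

Under that assumption, 5 t/s comes out to about 225 words per minute, which is in the range often quoted for silent reading speed, while 20 t/s is about 900 words per minute. That may be part of why low-double-digit speeds can already feel workable for chat, though the poll results are a better guide than this estimate.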

If you want to know more about the latest GPUs that can give you a smooth experience even with some larger locally hosted open-source large language models, we have a full list of the best graphics cards for local LLM inference right here: Best GPUs For Local LLMs (My Top Picks)